Hi,

I joined the University of Essex as a member of the technical support team in 1986 after studying electronics and telecommunications at college. After many exciting and interesting years being involved in various research projects and supporting students at many different levels, I felt the time was right to pursue some research of my own. This has resulted in the completion of an MSc by research and current plans to continue by way of a PhD.

My MSc project was related to Intelligent Environments and investigated the localized determination of abnormal or unusual behavior in an intelligent surface (iSurface) composed of a reprogrammable amorphous computing array. My supervisor was Dr. Martin Colley with co-supervisors Prof. Vic Callaghan and Dr. Graham Clarke.

Malcolm Lear 03-01-2017

MSc Research:

MSc work concludes successfully in late 2015. The research led to the development of a novel technique that can detect small changes to program code and execution behavior in a responsive, non-intrusive way. Applications include fault tolerance in amorphous computing and security in embedded systems.

MSc Research:

Work continues on my MSc delivering a solution to the fundamental problem of determining processor behavior with minimal or zero impact on performance. Further, the utilization of hardware to monitor performance by way of the JTAG interface coupled with a novel form of sampling creates a technology suitable for a wide range of applications in various fields including the highly valued area of embedded security.

MSc Research:

Work commences on my MSc on a part-time basis whilst I maintain the Embedded Systems Laboratory and support students on both hardware and software issues.

ESL Infrastructure:

Development continues on various plug-in boards for the new embedded systems board including a JTAG programmer and motor control board.

Embedded Systems Laboratory (ESL):

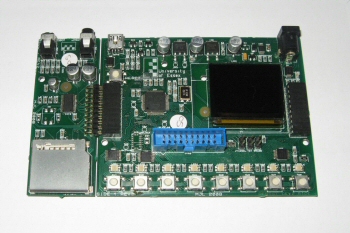

In 2008 Prof. Ian Henning formally divided robotics and embedded systems. All robots not using embedded operating systems (i.e. those running Windows, Linux etc.) were moved across to the robot arena and the Brooker Laboratory became the Embedded Systems Laboratory. This gave us the opportunity to develop our own embedded system boards to our own specification and I accepted the job to do the work. The system was to be modular, very flexible and utilise an ARM processor, as most microcontroller manufacturers supported this architecture and it was becoming ubiquitous in embedded devices such as smart phones. The specification was quite high but I managed to tick all the boxes and prototypes were up and running by mid 2008. An outside company (Circad Design Ltd) was then contracted to manufacture 50 units, which were duly delivered by the autumn term. Of particular note was the use of a full colour, high resolution OLED display. Attention then turned to developing smaller peripheral boards, including 24 bit audio, SD Slot, Network, Serial and Colour Camera.

EMG Spot Board:

Also in 2008 a PhD student required a multichannel EMG sensor with additional force and positional transducer inputs to connect to a SunSpot. Such devices are usually large and expensive, and at the time were normally found only in medical facilities. However, I managed to design a multichannel unit about the size of a box of matches. I was informed that it outperformed typical large professional devices.

SunSpot Rovas:

We received several preproduction small microcontroller boards from Sun Microsystems called SunSpots. These were small wireless enabled battery powered modules with various built in sensors. It seemed a good idea to utilize these boards in an updated Rova robot, or at least to engineer in the option. This was completed successfully by the end of 2007, in such a way that switching between the 68000 CPU board running VxWorks and the new SunSpots running Java code could be done in less than 5 minutes.

Bio or Emotion Sensor:

A research project required a small emotion sensor connected to a SunSpot. I developed a sensor that sensed body temperature, perspiration, heart rate, and blood pressure all picked up by a single finger.

The New iSpace:

The iDorm2 changes its name to the iSpace to emphasize that it's about all living environments, not just bedroom and study. I was involved in discussions on infrastructure and investigated serial bus protocols and other relevant communication technology that would be required to get small embedded systems communicating with effectors, sensors and each other. The overall idea was to distribute the intelligence by running 'agent' software on small subsystems.

Large Robot for iDorm2:

The intelligent environments project (iDorm1) was now moving to a new purpose built flat initially called the iDorm2. There was a request for a Marvin type robot with more features, larger, longer running with robotic arm and colour vision. Construction commenced late 2004.

New Big Robots:

The original 4 Marvin robots needed to be updated and two more needed to be built. Component obsolescence and advances in technology led to a board level redesign using the latest CPLDs, resulting in greater performance and lower power consumption. The main drive motors were changed to DC rather than the steppers used before. These were driven by a novel CPLD circuit that emulated stepper motor operation with step loss memory. This maintained software compatibility and gained the boost in both speed and torque provided by DC motors, whilst keeping the positional accuracy afforded by steppers.

Ultrasonic Navigation:

The Marvin redesign allowed me to experiment with the possibility of gaining positional information from the ultrasonic range finding system. The original system I designed was already novel in that it was synchronized with a global radio clock transmitted to all the robots in the lab to avoid ultrasonic interference between the many range finders. By redesigning the ultrasonic boards, each robot could determine not only reflection times but also its distance from other range finders (other robots). The system proved very successful and could perhaps be usefully deployed in future swarm behaviour research.
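
The difference between the two measurements can be illustrated with a little arithmetic. The following is only a minimal sketch and not the original board logic: the function names, the speed of sound constant and the idea that the emission time of another robot is known from the shared radio clock are all assumptions made for illustration.

```python
# Illustrative sketch only; names and constants are assumptions, not the original design.
SPEED_OF_SOUND_M_PER_S = 343.0  # approximate value for dry air at about 20 degrees C


def echo_distance(round_trip_s: float) -> float:
    """Distance to a reflecting obstacle: the pulse travels out and back."""
    return SPEED_OF_SOUND_M_PER_S * round_trip_s / 2.0


def direct_distance(emit_time_s: float, arrival_time_s: float) -> float:
    """Distance to another range finder: the pulse travels one way only.

    Both times are assumed to be measured against the same shared clock.
    """
    return SPEED_OF_SOUND_M_PER_S * (arrival_time_s - emit_time_s)


if __name__ == "__main__":
    print(echo_distance(0.010))         # obstacle distance in metres
    print(direct_distance(0.0, 0.010))  # robot-to-robot distance in metres
```

A one-way measurement only makes sense when sender and receiver agree on the moment of emission, which is why a shared clock matters here.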

Powered Floor:

A new robotic arena was built in 2002 and a long standing problem with the existing robots was addressed. The issue was how to get continuous power to a robot so that unsupervised long term experiments could be undertaken and eliminate power cables to support equipment. The solution provided by Prof. Ulrich Nehmzow was the electrically powered floor (E-Floor). The mechanical and electrical specifications were decided on, but engineering safety into the system was difficult to realize. I approached the problem and came up with a novel way to continuously monitor the floor for fault conditions. The result was a powered floor that could deliver the very high currents required to charge and power the robots but could detect the very low currents typical of a fault condition and cut the power feeding that section of floor.

Intelligent Environments:

A new long term research project led by Prof. Vic Callaghan started in 2001. The project involves intelligent habitats and the first stage of this research will be the construction of a small dormitory with unobtrusive embedded computing, creating an intelligent environment called the iDorm. My initial involvement was to design and build a hardware simulation small enough to fit on a desk that the students could program, so as to work with a form of intelligent environment on a one to one basis. The system therefore had to possess all the basic environmental systems you would require in a typical habitat, such as lighting, heating, ventilation etc., and associated sensors. The first prototype was completed and 16 units (mDorms) built by the end of the year.

Very Small Robots:

Dr. Huosheng Hu decided it would be a good idea to be actively involved in some sort of robotic competition that would promote the laboratory and create interest and enthusiasm in the students. The recently introduced football competition (RoboCup) involving small (3x3") robots seemed ideal. I designed and built the micro robots within a few months and developed an innovative IR range finding system that was unaffected by ambient light. The main control of the robots is by remote via a PC with an overhead camera. However the latency of such a system led me to design a local system that could locate the ball and guide it by onboard software.

Video Compression:

I had been involved in research starting in 1994 into the possibilities of using tele-robotic vision to create a security robot, a type of "night watchman" on duty in unmanned premises, such as supermarkets. One of the main requirements for such a system was live video streaming. The solution had to involve video compression since network speeds were quite slow at the time. The compression also had to be done without loading the main CPU, so a separate compression engine had to be designed. We decided that the best solution was to use frame deltas with some sort of run length encoding into a buffer that the CPU could access through the VME bus. My knowledge of video, previous work using programmable logic and the earlier frame grabber all helped in developing what turned out to be a very novel solution. A serious issue turned out to be low level changes of scene that resulted in bad compression ratios. My solution was a modification of the run length encoding that introduced a form of hysteresis, thus ignoring subtle changes but not propagating errors into the frame deltas. The entire compression system fitted into just one CPLD, whilst another handled the VME bus access.
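
The general idea of frame deltas with run length encoding and hysteresis can be sketched in software. This is a minimal illustration under stated assumptions, not the CPLD design: the run format, the two thresholds and the function names are hypothetical. Each pixel is compared against the reference frame the decoder holds (not the previous raw frame), so ignored subtle changes cannot accumulate into errors.

```python
from typing import List, Tuple

# One run: (number of unchanged pixels to skip, new pixel values that follow).
# This run format is an assumption made for illustration.
Run = Tuple[int, List[int]]


def encode_delta(reference: List[int], frame: List[int],
                 start_threshold: int = 8, stop_threshold: int = 3) -> List[Run]:
    """Encode the changes from `reference` to `frame` as skip/literal runs.

    `reference` mirrors the frame held by the decoder and is updated in place.
    Hysteresis: a changed run starts only when the difference exceeds
    `start_threshold`, but continues while it exceeds `stop_threshold`.
    """
    runs: List[Run] = []
    skip = 0
    literals: List[int] = []
    in_change = False
    for i, pixel in enumerate(frame):
        diff = abs(pixel - reference[i])
        changed = diff > (stop_threshold if in_change else start_threshold)
        if changed:
            literals.append(pixel)
            reference[i] = pixel  # the decoder will hold exactly this value
            in_change = True
        else:
            if in_change:
                runs.append((skip, literals))
                skip, literals = 0, []
                in_change = False
            skip += 1  # subtle change ignored; reference is left untouched
    if literals:
        runs.append((skip, literals))
    return runs


def decode_delta(reference: List[int], runs: List[Run]) -> None:
    """Apply encoded runs to the decoder's copy of the reference frame."""
    pos = 0
    for skip, literals in runs:
        pos += skip
        reference[pos:pos + len(literals)] = literals
        pos += len(literals)


if __name__ == "__main__":
    encoder_ref = [10] * 8
    decoder_ref = [10] * 8
    runs = encode_delta(encoder_ref, [10, 12, 10, 40, 45, 14, 10, 10])
    decode_delta(decoder_ref, runs)
    print(runs, decoder_ref == encoder_ref)
```

Because unchanged pixels leave the reference untouched, a slow drift in brightness is eventually sent once it exceeds the start threshold, rather than being lost.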

Spin Off:

With the development of the video compression hardware and the CPU board from 1997, an idea formed to produce a small, robust, stand alone network video system that we could market. To achieve this, Prof. Vic Callaghan, Dr. Martin Colley, Paul Chernett and I formed a spin off company called netCam. However, it proved very difficult to market, and it became apparent that selling the idea of a network camera (a disruptive technology) to a mature market that had always employed direct video connections was going to be hard work. Nevertheless, we did sell at least 50 units to a global market, including customers such as GCHQ in the UK and the Hoover Dam in the US.

Small Robots:

In 1997 it was decided that we needed some compact desktop robots that were compatible in some ways with the larger Marvin robots and used the same VxWorks RTOS. This time we made the decision to develop our own VME based CPU board that could be used in many other projects. I finished the design for the CPU and IO/motor driver boards by the end of the year. These proved to be utterly reliable and none to this day have failed. 20 of these new desktop robots (Rovas) were built and still function.

Hardware Simulators:

The laboratory only had floor room for about six robots so it was decided to build desktop simulators. I fitted out 12 VME racks with identical hardware to the robots and designed a small unit with wheels and beacon scanner that functioned in the same way as the full size robots.

Wireless Networks:

In this period I also designed and built a wireless network with the intention of sharing data between the robots. The system worked but was never used.

The Brooker Laboratory:

I moved from the 3rd year hardware laboratory to the new Brooker laboratory in 1994 to continue development of the robots (now called Marvin robots) and to advise students on hardware and low-level software design.

Video Store:

One of the more interesting projects was the development of a vision system capable of grabbing single frames from a video camera for use with the robots. The final design made extensive use of CPLDs and was extremely flexible, with sampling rates from 44 kHz up to 16 MHz, thus enabling it to handle audio or video. This was far in advance of any off-the-shelf VME product at the time. Although only black and white, the resolution was so high that PAL/NTSC colour decoding could be achieved in software.

Navigation:

I also developed a robot navigation system employing coded infrared beacons at known locations in the laboratory and infrared head scanners attached to the top of the robots. Using both each beacon's unique ID and its direction, a highly accurate location could be computed.
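
As an illustration of how a position can follow from beacon IDs and directions, here is a minimal sketch that intersects two bearing lines. It is not the original algorithm: the beacon table, the function name and the assumption that bearings are absolute (measured anticlockwise from the laboratory x-axis, i.e. the robot's heading is already known) are all hypothetical.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float]

# Hypothetical beacon table: beacon ID -> known (x, y) position in metres.
BEACONS: Dict[int, Point] = {1: (4.0, 1.0), 2: (1.0, 5.0)}


def locate(id_a: int, bearing_a: float, id_b: int, bearing_b: float) -> Point:
    """Return the robot position from two beacon sightings.

    Each bearing is the absolute angle in radians from the robot to the beacon.
    The robot lies on the line through each beacon along its bearing, so the
    position is the intersection of the two lines.
    """
    ax, ay = BEACONS[id_a]
    bx, by = BEACONS[id_b]
    dax, day = math.cos(bearing_a), math.sin(bearing_a)
    dbx, dby = math.cos(bearing_b), math.sin(bearing_b)
    # Solve t_a * d_a - t_b * d_b = A - B for the range t_a to beacon A.
    det = dbx * day - dax * dby
    if abs(det) < 1e-12:
        raise ValueError("bearings are parallel; position is not determined")
    rx, ry = ax - bx, ay - by
    t_a = (dbx * ry - rx * dby) / det
    return (ax - t_a * dax, ay - t_a * day)


if __name__ == "__main__":
    # Beacon 1 seen along the x-axis, beacon 2 seen along the y-axis.
    print(locate(1, 0.0, 2, math.pi / 2))
```

With more than two beacons in view, the extra sightings could be used to average out measurement noise or to remove the need for a known heading.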

A BBC 'Look East' Report:

Essex University Brooker Laboratory - The Early Days

The Big Robots:

In 1993 work started on a prototype robot that could be used in a new Intelligent Systems Laboratory proposed by Prof. Vic Callaghan. The robots had to be robust, have a flexible architecture, be modular and employ a reliable OS. All requirements were met and the design was finalized by the end of the year. The robots used the Motorola VME bus and VxWorks RTOS. Four robots were built and fully functional by the time the Brooker laboratory was officially opened in early 1994.

The CAD System:

In 1989 I needed a printed circuit board computer aided design system. Commercial packages just didn't have all the features I required or were simply too expensive, so I wrote my own PCB design package running on a Sinclair QL. This program has been maintained and updated, and is still extensively used today, albeit running on a PC emulation of the SMSQ/E OS.

Transputers:

In this period I was involved in various Transputer projects and decided the IFS could be redesigned to take advantage of the parallel processing approach that Transputers offered. With this in mind a prototype Transputer based associative memory controller was designed and built. This prototype was so successful it was decided to build a much larger 32 bit version called the IFS/2. I worked with Erik James on this project through to 1992. One IFS/2 requirement was a complex 32 bit hasher which I designed around an early Xilinx FPGA programmed first in PALASM and later in VHDL. Compiling the design in its final form took >24 hours!

Update 13-09-2013: Tentative discussions on a new approach to artificial intelligence have suggested an interesting application for the hardware driven associative memory technology developed in 1989. With this in mind, the IFS memory control systems are being re-evaluated and the original hasher design has been compiled into a new Lattice MachXO FPGA. Compilation took <30 seconds!

Arrival:

I first started work at the University of Essex in 1986 taking charge of the 3rd year hardware laboratory.

The Intelligent File Store:

In 1986 Prof. Simon Lavington moved to the University of Essex from Manchester bringing with him his Intelligent File Store (IFS) project. The IFS was essentially a file store utilizing associative memory controlled by dedicated hardware to speed up data searches. I was recommended to Simon by the department as being the ideal engineer to pursue further hardware development. I was actively involved in this research project through to 1992.

People’s living space is becoming rich in electronic devices, most of which have computational capabilities undreamt of a few years ago; indeed even humble devices such as the bedroom clock usually utilize embedded systems technology. Increasingly, such technology will communicate and interact autonomously as protocols are implemented and refined (the Internet of Things). Of particular interest in the near future are printable electronics and nanotechnology, which open up many new possibilities in pervasive computing. Such technology will enable entire surfaces, for example walls, to become “intelligent” and “aware” of the environment. Such a surface (iSurface) can be considered an amorphous computer composed of a large array of identical processing units (iCells), each with its own sensor/effectors. Whilst nano-sized particles interconnecting in an ad hoc way may just be a dream, there are more practical approaches that could have short term applications in intelligent environments. One such approach is a structured array of iCells constructed at more modest scales, perhaps making use of new printing methods onto paper. An important requirement of such a surface is the need for a fast, reliable method to determine iCell operation, performance and code integrity. My MSc research aim was to develop a method that can create long (>=32 bit) stable, robust metrics using a profiling technique that represents the current operational state of an iCell, thus enabling the quick exchange of diagnostics between iCells along with data traffic. Behavioral metrics that could highlight faulty sensor/effector hardware, for example, are meaningless unless the iCell is running correctly loaded program code. Therefore the primary aim of the research was the development of a metric of code integrity that was stable even when external events affect program flow within the iCell.
Key requirements in the development of this system were fast acquisition of diagnostic variables, minimal effect on normal operation and the possibility of a hardware implementation which could be completely non-intrusive in operation. The development of this code integrity metric is described in my paper "Stable Metrics in Amorphous Computing: An Application to Validate Operation and Monitor Behavior". The developed system fulfilled all research goals and in particular provided a novel method to create a stable metric that could determine compromised or incorrectly loaded program code. The metric of code integrity had both attributes of stability and responsiveness to change, something that has proven difficult to attain before. The uniqueness of the metrics produced by the hardware was also investigated and was determined to be very good, with efficient use of metric bit length. Impact on processor performance was also deemed acceptable at 2.31%, with an architecture that could theoretically be implemented in ‘system on chip’ (SOC) with zero processor overheads.


Thesis: Determination of Correct Operation and Behavior of a Structured Amorphous Surface

Abstract-

A recurring theme in intelligent environments is the intelligent surface composed of nanoscale processing units (smart dust). Such a surface (iSurface) can be considered an amorphous computer composed of a large array of identical processing units (iCells) each with its own sensor/effectors. An important requirement of such a surface is the need for a fast, reliable method to determine iCell operation, performance and code integrity. Any practical solution must fulfil certain criteria. First, the impact on intercellular data communication bandwidth must be kept to a minimum; this is particularly important in high density, high speed iSurface applications such as high resolution video display. Previous work on processor profiling offered a possible solution in the form of metrics derived from profiling. This thesis describes a method developed to create long (>=32 bit) stable, robust metrics using a profiling technique that represents the current operational state of an iCell, thus enabling the quick exchange of diagnostics between iCells along with data traffic. Key requirements in the development of this system were fast acquisition of diagnostic variables, minimal effect on normal operation and the possibility of a hardware implementation which could be completely non-intrusive in operation.

The hardware developed fulfilled all these criteria; in particular, a novel method was developed to create a stable metric that could determine compromised or incorrectly loaded code. The metric of code integrity had both attributes of stability and responsiveness to change, something that has proven difficult to attain before. The uniqueness of the metrics produced by the hardware was also investigated and was determined to be very good, and metric bit length was efficiently used. Impact on processor performance was also deemed acceptable at 2.31% and the developed architecture could theoretically be implemented in ‘system on chip’ (SOC) with zero processor overheads.

IE13: Stable Metrics in Amorphous Computing: An Application to Validate Operation and Monitor Behavior

Abstract-

A recurring theme in intelligent environments is the intelligent surface composed of nanoscale processing units (smart dust). Such a surface (iSurface) can be considered an amorphous computer composed of a large array of identical processing units (iCells) each with its own sensor/effectors. Whilst nano-sized particles interconnecting in an ad hoc way may just be a dream, there are more practical approaches that could have short term applications in intelligent environments. One such approach is a structured array of iCells constructed at more modest scales, perhaps making use of new printing methods onto paper. An important requirement of such a surface is the need for a fast, reliable method to determine iCell operation, performance and code integrity. This paper describes a method to create long (>=32 bit) stable, robust metrics using a profiling technique that represents the current operational state of an iCell, thus enabling the quick exchange of diagnostics between iCells along with data traffic. This paper looks at how stable diagnostic metrics, and in particular a metric of code integrity, can be created even when external events affect program flow within the iCell. Key requirements in the development of this system were fast acquisition of diagnostic variables, minimal effect on normal operation and the possibility of a hardware implementation which could be completely non-intrusive in operation. The described method can create several types of metrics, allowing quick determination of, for example, code validation, abnormal operation and unusual behavior.

WOFIE13: Putting the Buzz Back into Computer Science Education

Abstract-

This paper describes a rapid-prototyping system, based around a set of modularised electronics, Buzz-Boards, which enable developers to quickly build and deploy a wide variety of products ranging from intelligent environments, through robots, to smartphone peripherals. In this paper we introduce readers to Buzz-Board technology, illustrating its use through three examples, a desktop robot (BuzzBot), a desktop intelligent environment (BuzzBox) and an Internet-of-Things application using a Raspberry Pi adaptor (BuzzBerry). As part of this paper we provide a general overview of Computer Science curriculum developments and explain how Buzz-Board technology can provide a highly motivating and effective focus for computer science practical assignments. This paper adds to earlier Buzz-Board publications by describing support for Raspberry Pis and intelligent environments, together with reviewing the latest developments in computer science curricula in the USA and UK.

iC2011: Teaching Next Generation Computing Skills: The Challenge of Embedded Computing

Abstract-

As new paradigms, such as pervasive computing and ambient intelligence, change the nature of the computer application market place, so do the skills and educational technology needed by students and teachers. The once dominant PC now finds itself outnumbered by several orders of magnitude by much smaller and cheaper embedded computers which can extend the internet from being just a means of connecting personal computers together, to enabling a world of connected "things"; the so-called "internet of things". In this world hundreds and even thousands of tiny service providing embedded-computers deliver a variety of services to us (some physical, some information) in ways that enable exciting new lifestyles and business opportunities. Applications range from the creation of digital homes (with their myriad of high-tech appliances), smart vehicles (cars, aeroplanes & robotics) through to telecommunications (mobile phones, personal navigation or mobile learning). At the heart of this new technology is the "embedded-computer" which, from a software developer's perspective, is significantly different to conventional desktop computers as they need to operate in real-time with computationally small resources and, in general, don't have inbuilt keyboards, displays or programming tools. All these factors conspire to bring significantly different educational and training requirements from older generations of computers, such as PCs. In this paper we examine these issues and introduce a set of modularised tools to arm universities, and other training institutions, with the means to support the acquisition of these key skills by students in a motivating and educationally sound way.

CS10: We all wear dark glasses now

Abstract-

The story describes a world of the future in which there are two entirely different groups of people, one involved in the development and reproduction of the industrial base of the country and ruled by a centrally controlled virtual reality that is subject to constant innovation through a totally controlling fashion machine. The ruling group who control and maintain the other group have access to the full richness of the technology they administer and use to keep the world in order and are free to experience reality in an entirely different way. This is about the way that a breakdown in the system is dealt with and is told from the perspective of a third party observing these two groups.

FPGA T&I: Comparing Several Implementations of a Hardware Hasher Unit

Abstract-

As part of the design of a knowledge-base server (Refs. 1, 2), there arose a requirement for a hardware sub-unit which could perform a general hashing function on variable length tuples. The knowledge-base server uses transputers for its internal control. The hardware hasher unit replaces an OCCAM procedure which took an unacceptably long time to execute (about 10 microseconds). The target time was under 160 nanoseconds - (see below).

The first implementation of the hardware hasher was carried out using conventional GAL technology. This worked at acceptable speed (order of 40 nanoseconds) but the nine GAL chips took up a relatively large amount of PCB area. Hasher designs have since been implemented in Xilinx 3000 series FPGA technology, Altera MAX series 5000 technology, Actel ACTI technology, National MAPL technology, AMD MACH technology, and Lattice Semiconductor pLSI technology. In this paper we compare the speed, cost and PCB area for all seven designs. We also comment generally on the suitability of each candidate technology for this type of task, and then draw some conclusions which may help to guide future designs in other applications areas.

AoT: A Modularly Extensible Scheme for Exploiting Data Parallelism

Abstract-

Non-numeric (e.g. symbolic) information systems contain much natural parallelism. This parallelism becomes evident in frequently-occurring tasks such as pattern-directed search and set intersection. We describe an add-on unit, based on transputers, which exploits data parallelism by performing set and graph operations in situ within an associative memory frame-

Embedded Systems Laboratory

School of Computer Science & Electronic Engineering

University of Essex

Wivenhoe Park

Colchester

Essex, CO4 3SQ

UK